Installation

Single-node installation with Docker

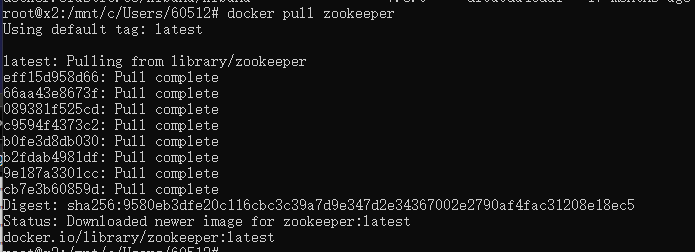

Pull the image

# Pulls the latest version by default; to pin a version, use zookeeper:<version>

docker pull zookeeper

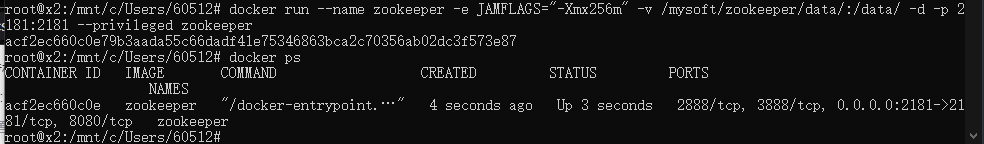

Create and run the container

docker run --name zookeeper -e JVMFLAGS="-Xmx256m" -v /mysoft/zookeeper/data/:/data/ -d -p 2181:2181 --privileged zookeeper

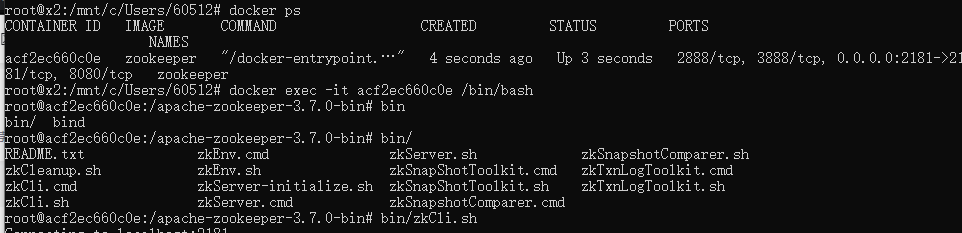

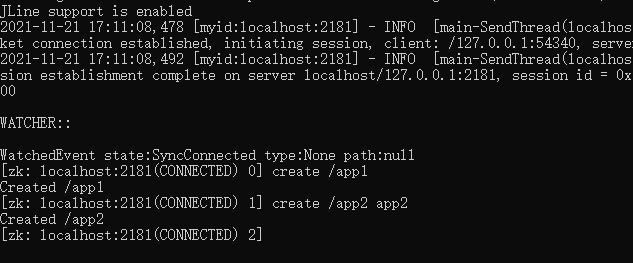

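Create a node with the zk client

# Enter the container (acf2ec660c0e is the container ID from this run; the container name zookeeper works as well)

docker exec -it acf2ec660c0e /bin/bash

# Start the zk client

bin/zkCli.sh

# Create a node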

create /app2 app2
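The same client commands can also be run without an interactive session, since zkCli.sh accepts a command after its connection options. The helper below is a minimal sketch: the function name zk_cmd and the DOCKER override variable are hypothetical, and it assumes the container is named zookeeper and that bin/zkCli.sh resolves from the image's working directory, as it does in the steps above.

```shell
# Hypothetical helper: run a single zkCli command inside a container, then exit.
# Setting DOCKER=echo prints the command line instead of executing it.
zk_cmd() {
  container=$1
  shift
  "${DOCKER:-docker}" exec "$container" bin/zkCli.sh -server localhost:2181 "$@"
}

# Usage (assumes a running container named "zookeeper"):
#   zk_cmd zookeeper create /app2 app2
#   zk_cmd zookeeper get /app2
```

This is handy for scripted checks, where opening a shell in the container first is unnecessary.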

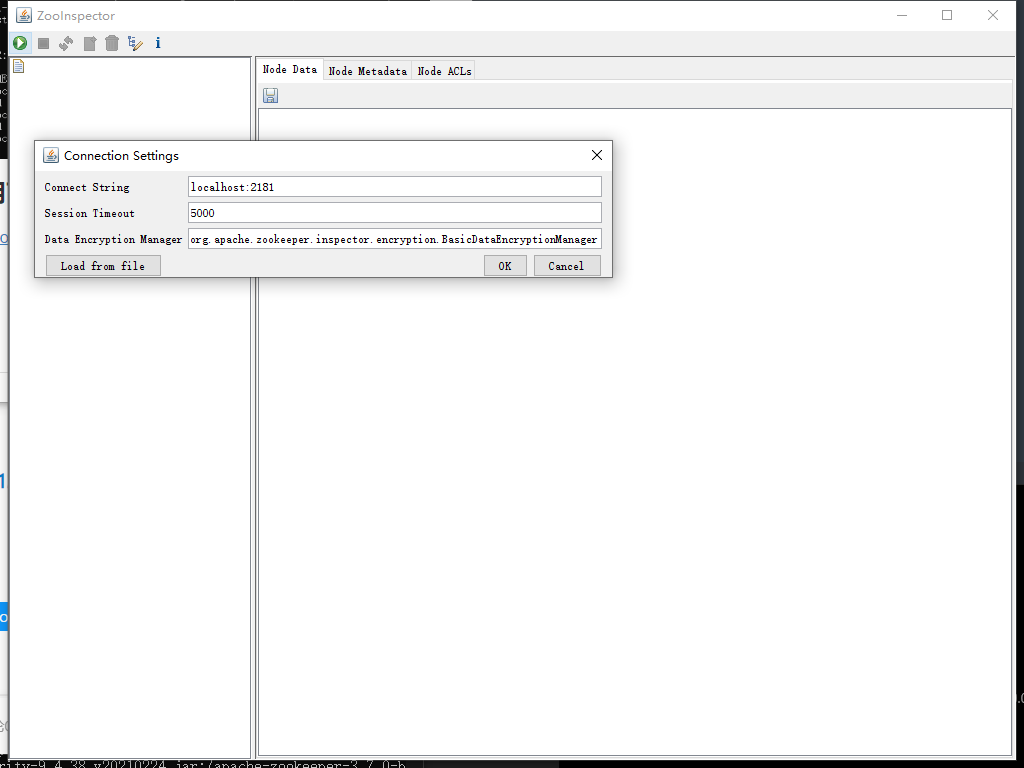

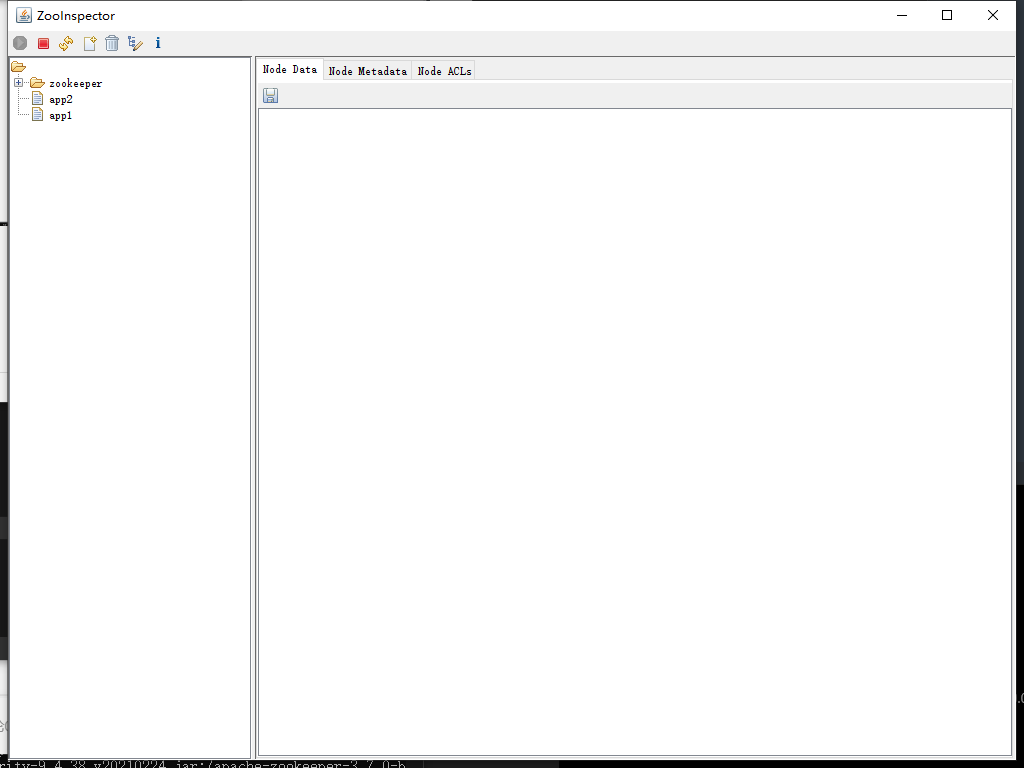

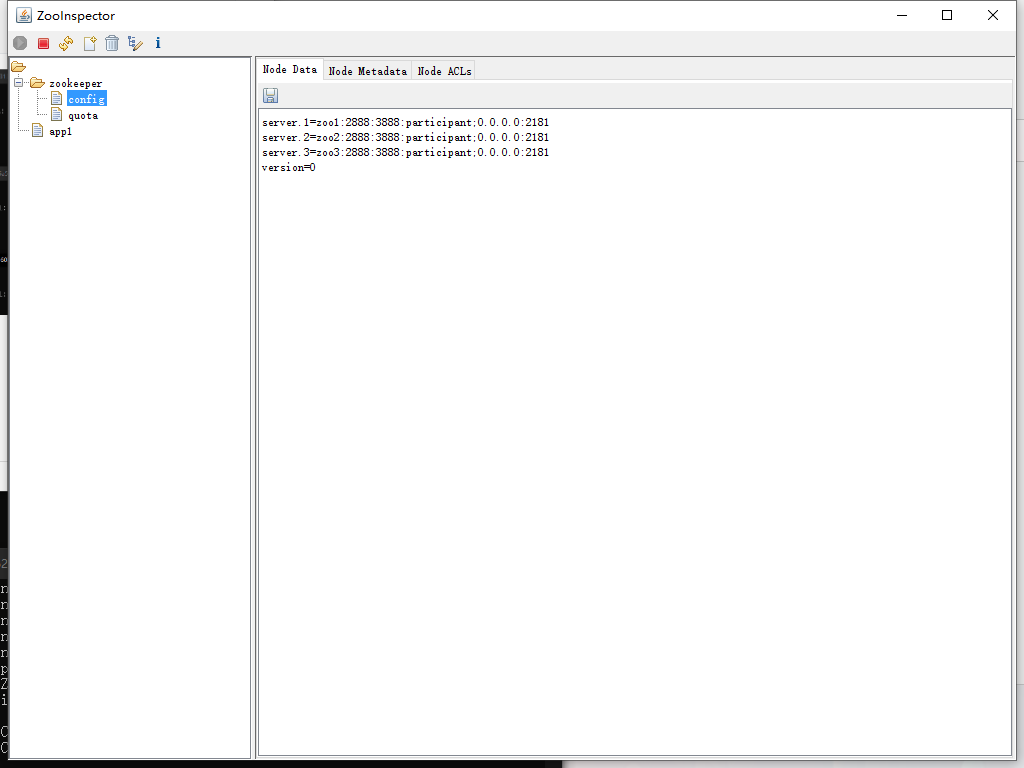

Inspect the result with the ZooInspector GUI tool

Download ZooInspector, unpack it, and run zookeeper-dev-ZooInspector.jar.

Then set the connection parameters and connect.

Docker cluster (1)

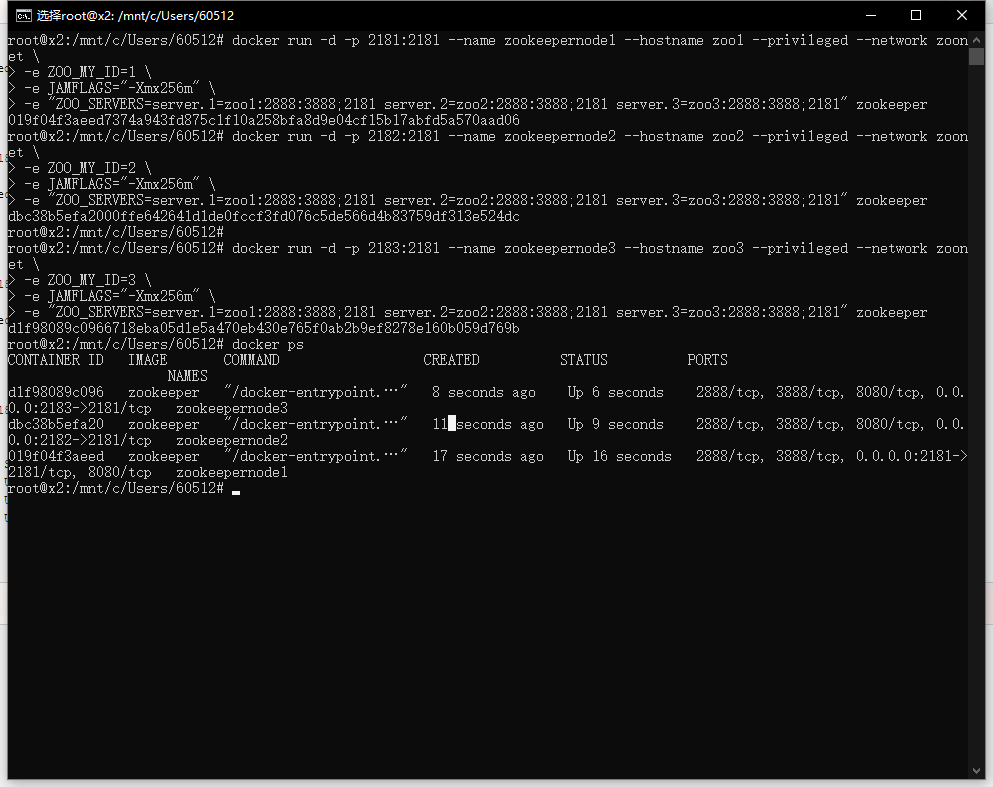

Create the network and run the containers

# Create a bridge network for communication between the zookeeper cluster nodes.

docker network create --driver bridge zoonet

# Create three containers on the same bridge network. Each one is mapped to a different host port and has its own hostname and its own ZOO_MY_ID; the cluster is formed from the hostnames.

docker run -d -p 2181:2181 --name zookeepernode1 --hostname zoo1 --privileged --network zoonet \
-e ZOO_MY_ID=1 \
-e JVMFLAGS="-Xmx256m" \
-e "ZOO_SERVERS=server.1=zoo1:2888:3888;2181 server.2=zoo2:2888:3888;2181 server.3=zoo3:2888:3888;2181" zookeeper

docker run -d -p 2182:2181 --name zookeepernode2 --hostname zoo2 --privileged --network zoonet \
-e ZOO_MY_ID=2 \
-e JVMFLAGS="-Xmx256m" \
-e "ZOO_SERVERS=server.1=zoo1:2888:3888;2181 server.2=zoo2:2888:3888;2181 server.3=zoo3:2888:3888;2181" zookeeper

docker run -d -p 2183:2181 --name zookeepernode3 --hostname zoo3 --privileged --network zoonet \
-e ZOO_MY_ID=3 \
-e JVMFLAGS="-Xmx256m" \
-e "ZOO_SERVERS=server.1=zoo1:2888:3888;2181 server.2=zoo2:2888:3888;2181 server.3=zoo3:2888:3888;2181" zookeeper
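The three commands differ only in the node number, so they can be generated in a loop. The following is a sketch rather than a drop-in script: the function names zoo_servers and start_cluster and the DOCKER override are hypothetical, it assumes the zoonet network already exists, and it passes the heap setting through JVMFLAGS, the variable the ZooKeeper start scripts read.

```shell
# Build the ZOO_SERVERS value for an ensemble of N nodes named zoo1..zooN.
zoo_servers() {
  n=$1
  i=1
  out=""
  while [ "$i" -le "$n" ]; do
    out="${out:+$out }server.${i}=zoo${i}:2888:3888;2181"
    i=$((i + 1))
  done
  printf '%s\n' "$out"
}

# Start N nodes; node i is published on host port 2180+i.
# Setting DOCKER=echo prints the commands instead of running them.
start_cluster() {
  n=$1
  servers=$(zoo_servers "$n")
  i=1
  while [ "$i" -le "$n" ]; do
    "${DOCKER:-docker}" run -d -p "$((2180 + i)):2181" \
      --name "zookeepernode$i" --hostname "zoo$i" --privileged --network zoonet \
      -e "ZOO_MY_ID=$i" -e "JVMFLAGS=-Xmx256m" -e "ZOO_SERVERS=$servers" zookeeper
    i=$((i + 1))
  done
}

# Usage: start_cluster 3
```

Generating the server list in one place keeps ZOO_SERVERS identical across the nodes.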

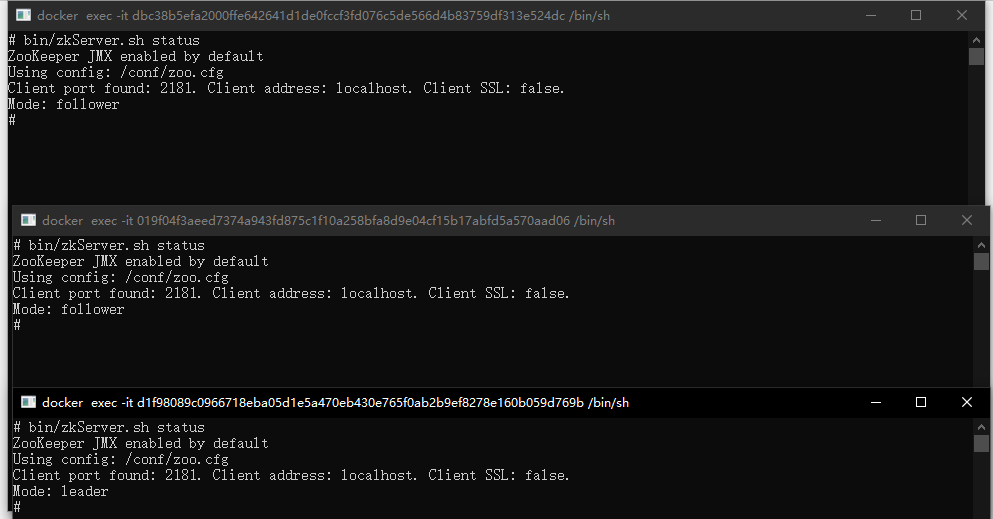

Check the status of each server

# Enter the container

docker exec -it <container-id> /bin/bash

# Check the server status

bin/zkServer.sh status
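To see the role of every node at once, the status output can be filtered for its "Mode:" line (leader, follower, or standalone). This is a small sketch; the function names zk_mode and cluster_modes are hypothetical, and the container names are the ones used in the docker run commands above.

```shell
# Extract the role from `zkServer.sh status` output read on stdin.
zk_mode() {
  sed -n 's/^Mode: //p'
}

# Print "<container>: <mode>" for each container name given.
cluster_modes() {
  for c in "$@"; do
    mode=$("${DOCKER:-docker}" exec "$c" bin/zkServer.sh status 2>/dev/null | zk_mode)
    printf '%s: %s\n' "$c" "$mode"
  done
}

# Usage: cluster_modes zookeepernode1 zookeepernode2 zookeepernode3
```

In a healthy three-node cluster, one container should report leader and the other two follower.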

Inspect the result with ZooInspector

Docker cluster (2)

Using Compose makes the cluster easier to manage.

Write the compose file

version: '3.1'
services:
  zoo1:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo1
    ports:
      - 2181:2181
    volumes: # mount data
      - /usr/local/zookeeper-cluster/node4/data:/data
      - /usr/local/zookeeper-cluster/node4/datalog:/datalog
    environment:
      ZOO_MY_ID: 4
      ZOO_SERVERS: server.4=0.0.0.0:2888:3888;2181 server.5=zoo2:2888:3888;2181 server.6=zoo3:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.14

  zoo2:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo2
    ports:
      - 2182:2181
    volumes: # mount data
      - /usr/local/zookeeper-cluster/node5/data:/data
      - /usr/local/zookeeper-cluster/node5/datalog:/datalog
    environment:
      ZOO_MY_ID: 5
      ZOO_SERVERS: server.4=zoo1:2888:3888;2181 server.5=0.0.0.0:2888:3888;2181 server.6=zoo3:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.15

  zoo3:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo3
    ports:
      - 2183:2181
    volumes: # mount data
      - /usr/local/zookeeper-cluster/node6/data:/data
      - /usr/local/zookeeper-cluster/node6/datalog:/datalog
    environment:
      ZOO_MY_ID: 6
      ZOO_SERVERS: server.4=zoo1:2888:3888;2181 server.5=zoo2:2888:3888;2181 server.6=0.0.0.0:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.16

networks: # custom network
  default:
    external:
      name: zoonet2
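Because the compose file declares zoonet2 as external, that network has to exist before the file is run, and Docker only accepts fixed ipv4_address values on a network created with an explicit subnet. A sketch of that preparatory step follows; the 172.18.0.0/16 subnet is an assumption inferred from the addresses in the file (it may overlap with another bridge network on the host, in which case a different range and matching addresses are needed), and the function name and DOCKER override are hypothetical.

```shell
# Create the external network the compose file refers to.
# Assumption: 172.18.0.0/16 is free on this host and covers 172.18.0.14-16.
# Setting DOCKER=echo prints the command instead of running it.
create_zoonet2() {
  "${DOCKER:-docker}" network create --driver bridge --subnet 172.18.0.0/16 zoonet2
}

# Usage: create_zoonet2
```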

Run the compose file

docker-compose -f docker-compose.yml up -d

**Stop the zookeeper cluster**

docker-compose stop

**Remove the zookeeper cluster**

docker-compose rm

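Installing zookeeper on a physical machine

Download zookeeper

From the download directory on the Apache website, pick the version you want and download the -bin.tar.gz package.

The package with bin in its name contains the compiled binaries; the plain tar.gz package contains only the source code and cannot be run directly.

Unpack zookeeper and set up the configuration file

# Unpack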

tar -zxf zookeeper-xxx-bin.tar.gz -C <dir>

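# Copy the sample configuration file (it lives in the conf directory)

cp zoo_sample.cfg zoo.cfg

Configuration file

# The number of milliseconds of each tick

# Basic time unit for heartbeats and timeouts between servers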

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

# When a follower first connects to the leader, it may wait up to initLimit*tickTime, i.e. 10 ticks.

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

# Synchronization wait limit: 5 ticks.

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

# Data storage directory

dataDir=/data/zookeeper/single-3.7.0

# the port at which the clients will connect

# Port that clients connect to

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

# Maximum number of concurrent connections allowed from a single client (per IP address)

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

## Metrics Providers

#

# https://prometheus.io Metrics Exporter

#metricsProvider.className=org.apache.zookeeper.metrics.prometheus.PrometheusMetricsProvider

#metricsProvider.httpPort=7000

#metricsProvider.exportJvmInfo=true
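Since initLimit and syncLimit are counted in ticks, it can help to convert them to wall-clock time. The sketch below reads the values from a zoo.cfg-style file and multiplies them by tickTime; the function names cfg_get and limits_ms are hypothetical, and the path in the usage line assumes the install location used in the start command of this section.

```shell
# Read one key from a zoo.cfg-style file (commented-out lines are ignored).
cfg_get() {
  sed -n "s/^$2=//p" "$1" | tail -n 1
}

# Print initLimit and syncLimit converted to milliseconds.
limits_ms() {
  tick=$(cfg_get "$1" tickTime)
  init=$(cfg_get "$1" initLimit)
  sync=$(cfg_get "$1" syncLimit)
  printf 'initLimit=%dms syncLimit=%dms\n' "$((tick * init))" "$((tick * sync))"
}

# Usage: limits_ms /opt/zookeeper/single-3.7.0/conf/zoo.cfg
```

With the values shown above (tickTime=2000, initLimit=10, syncLimit=5), this gives 20000 ms for the initial connection and 10000 ms for synchronization.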

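Create the required directory

# -p creates nested directories; this is the zookeeper data directory and must match dataDir in zoo.cfg

mkdir -p /data/zookeeper/single-3.7.0

Start the service

/opt/zookeeper/single-3.7.0/bin/zkServer.sh --config /opt/zookeeper/single-3.7.0/conf start

Output: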

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper/single-3.7.0/conf/zoo.cfg

Starting zookeeper ... STARTED

Stop the service

/opt/zookeeper/single-3.7.0/bin/zkServer.sh --config /opt/zookeeper/single-3.7.0/conf stop

Output:

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper/single-3.7.0/conf/zoo.cfg

Stopping zookeeper ... STOPPED
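The start and stop commands repeat the same two paths, so a small wrapper can save typing. This is only a convenience sketch: the function name zk and the ZK_HOME variable are hypothetical, with the default taken from the install path used above.

```shell
# Install location; override ZK_HOME if zookeeper lives elsewhere.
ZK_HOME=${ZK_HOME:-/opt/zookeeper/single-3.7.0}

# Run zkServer.sh against the conf directory under ZK_HOME.
zk() {
  "$ZK_HOME/bin/zkServer.sh" --config "$ZK_HOME/conf" "$@"
}

# Usage:
#   zk start
#   zk status
#   zk stop
```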

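Installing a zookeeper cluster

Using four machines as an example.

The steps are much the same as for a single machine.

Unpack zookeeper and set up the configuration file

# Unpack

tar -zxf zookeeper-xxx-bin.tar.gz -C <dir>

# Copy the sample configuration file (it lives in the conf directory)

cp zoo_sample.cfg zoo.cfg

Add the cluster node entries to zoo.cfg

# Cluster node list: 2888 is the port followers use to connect to the leader, 3888 is the leader-election port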

server.1=node01:2888:3888

server.2=node02:2888:3888

server.3=node03:2888:3888

server.4=node04:2888:3888
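A note on the ensemble size: ZooKeeper stays available only while a strict majority of the listed servers is running. The arithmetic can be sketched as follows; the function names quorum and tolerated_failures are hypothetical and serve only to illustrate the calculation.

```shell
# Smallest strict majority of an ensemble with n voting servers.
quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Number of servers that can fail while a majority remains.
tolerated_failures() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

# Usage:
#   quorum 4
#   tolerated_failures 4
```

For the four machines listed above the majority is three, so the cluster survives the loss of one machine, which is the same tolerance a three-machine cluster has; it takes a fifth machine to tolerate two failures.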

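Set each node's ID, taking node01 as the example. The myid file must sit in the directory that dataDir in zoo.cfg points to (assumed here to be /data/zookeeper/zk), and its value must match the N of that machine's server.N entry.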

mkdir -p /data/zookeeper/zk

echo 1 > /data/zookeeper/zk/myid
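The same step has to be repeated on every machine with a different number, so a small function that validates the ID before writing it can prevent a mistyped file. This is a sketch under the same assumption that the data directory is /data/zookeeper/zk; the function name write_myid is hypothetical.

```shell
# Write the node ID into <dataDir>/myid, creating the directory if needed.
# Rejects empty or non-numeric IDs.
write_myid() {
  data_dir=$1
  id=$2
  case $id in
    ''|*[!0-9]*)
      echo "myid must be a number" >&2
      return 1
      ;;
  esac
  mkdir -p "$data_dir" && printf '%s\n' "$id" > "$data_dir/myid"
}

# Usage on node02: write_myid /data/zookeeper/zk 2
```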

Start the service

# Run this on each of the four machines

zkServer.sh start

Check whether each node is the leader or a follower

zkServer.sh status

Start the client to verify

zkCli.sh

Show the help

help

ZooKeeper -server host:port -client-configuration properties-file cmd args

addWatch [-m mode] path # optional mode is one of [PERSISTENT, PERSISTENT_RECURSIVE] - default is PERSISTENT_RECURSIVE

addauth scheme auth

close

config [-c] [-w] [-s]

connect host:port

create [-s] [-e] [-c] [-t ttl] path [data] [acl]

delete [-v version] path

deleteall path [-b batch size]

delquota [-n|-b|-N|-B] path

get [-s] [-w] path

getAcl [-s] path

getAllChildrenNumber path

getEphemerals path

history

listquota path

ls [-s] [-w] [-R] path

printwatches on|off

quit

reconfig [-s] [-v version] [[-file path] | [-members serverID=host:port1:port2;port3[,...]*]] | [-add serverId=host:port1:port2;port3[,...]]* [-remove serverId[,...]*]

redo cmdno

removewatches path [-c|-d|-a] [-l]

set [-s] [-v version] path data

setAcl [-s] [-v version] [-R] path acl

setquota -n|-b|-N|-B val path

stat [-w] path

sync path

version

whoami